I Audited My Own LLM Visibility. The Results Were Humbling.

- Jennifer Leonard

- 2 days ago

Sometimes the best way to build a methodology is to eat your own cooking first.

It started with a client conversation.

Someone came to me wanting help showing up better in AI search -- not just traditional rankings, but the answers people now see in ChatGPT, Perplexity, and Google's AI Overviews.

It was the right question at the right moment. AI-driven discovery is reshaping how buyers find and evaluate brands, and most marketing teams are either ignoring it or reaching for tactics before they understand the problem. But here's the thing -- this is a genuinely new space. The playbook doesn't exist yet. And I wasn't willing to bring a half-built answer to a client before I'd tested it myself.

So I did what I always do when I see a problem worth solving: I built a framework, set a baseline, and started measuring.

I ran the audit on my own brand first.

That's the version of eating your own cooking I believe in -- not just using the tools you recommend, but doing the actual work, capturing real data, and being honest about what you find. Even when what you find is humbling.

Here's what the audit showed.

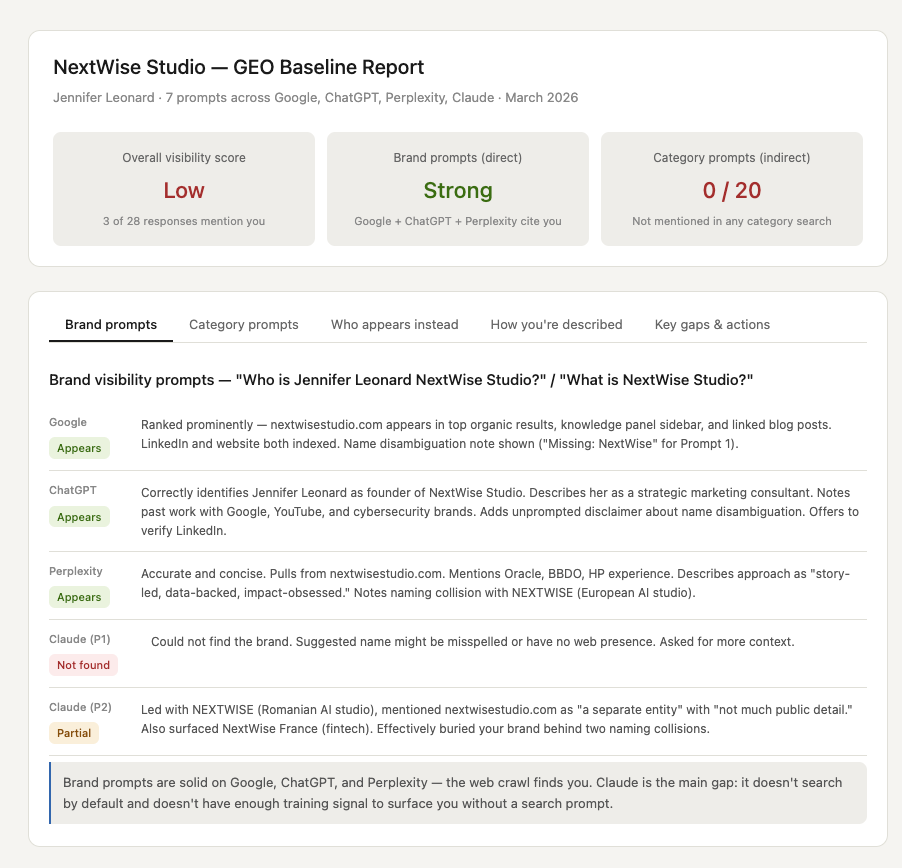

The brand prompts: better than expected

When I searched directly for my name and my studio, three of the four tools (Google, ChatGPT, and Perplexity) found me.

Google surfaced nextwisestudio.com prominently. ChatGPT correctly identified me as the founder of NextWise Studio, described my work accurately, and even referenced client work with Google and YouTube. Perplexity was the most accurate of all -- it pulled language directly from my site, cited my background at Oracle, BBDO, and HP, and described my approach without me having to prompt it further.

That part felt good. My on-page content is working. The web crawl finds me.

Then I ran the category prompts.

The category prompts: a clean zero

I ran five prompts that represent how a real potential client might search for someone like me:

"Who are the best AI marketing consultants for mid-size brands?"

"Who helps marketing teams with AI readiness?"

"Best consultants for AI search optimization"

"Who helps with lifecycle marketing and AI transformation?"

"Marketing consultant for LLM visibility and AI search"

That last one could have been written directly from my homepage.

NextWise Studio appeared in zero of the twenty responses across all four tools.

Not buried in the middle. Not a brief mention. Zero.

The names that appeared instead -- NP Digital, Matrix Marketing Group, iPullRank, Amsive, Wpromote, Omniscient Digital -- showed up repeatedly, most likely because they share one thing I don't yet have enough of: third-party citations. Roundup articles. Review directory listings. Guest bylines in publications LLMs trust.

My content quality isn't the issue. My distribution is.

The Claude blind spot

There was one result that surprised me more than the others.

When I asked Claude -- Anthropic's AI -- "Who is Jennifer Leonard NextWise Studio?", it couldn't find me at all. It suggested my name might be misspelled or that NextWise Studio might have no web presence.

When I asked "What is NextWise Studio?", it led with a Romanian AI company called NEXTWISE that launched in March 2026 and mentioned my studio as "a separate entity with not much public detail available."

This matters for two reasons. First, it means I have a naming collision problem that will only get worse as that company grows. Second, Claude's training data and search grounding simply don't have enough signal on me yet, and that's a gap I need to close deliberately.

What I'm doing about it

The audit gave me a clear picture of what's working and what isn't. My on-page copy is accurate, and the tools describe my work well when they find it. The problem is reach -- I only surface when someone already knows my name.

So I'm treating this as a case study in real time.

Here's what I'm changing:

On the site: Restructuring the "How I Help" page from bullet lists to prose, because LLMs parse complete sentences far more reliably than fragments. Adding LLM Visibility and AI Search as a named, standalone service. Building a full FAQ page written in the natural language of how buyers actually prompt AI tools. Updating the URL slug from /general-4 to /how-i-help. Adding Organization schema markup.

Off the site: Getting listed in consultant directories. Pitching guest posts to marketing publications. Building the third-party citation footprint that tells LLMs I belong in certain conversations -- not just when someone searches for me by name.

On the naming collision: Making "NextWise Studio" (with Studio) the consistent brand term everywhere, and adding clear entity disambiguation language to my About page so LLMs can tell us apart.
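For the Organization schema item, here is a minimal sketch of what that markup could look like, built in Python and printed as a JSON-LD script tag. The name, domain, and founder come from this post; the `https://` scheme, the helper name `organization_jsonld`, and both placeholder description strings are assumptions for illustration, not the markup actually on the site.

```python
import json


def organization_jsonld(name, url, founder, description, disambiguation):
    """Build a schema.org Organization object as a plain dict (hypothetical helper)."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "founder": {"@type": "Person", "name": founder},
        "description": description,
        # schema.org property intended to tell similar-sounding entities apart
        "disambiguatingDescription": disambiguation,
    }


markup = organization_jsonld(
    name="NextWise Studio",
    url="https://nextwisestudio.com",  # scheme assumed
    founder="Jennifer Leonard",
    description="PLACEHOLDER: one-sentence description of the studio's services.",
    disambiguation="PLACEHOLDER: a sentence stating this is not the company NEXTWISE.",
)

# Wrap the JSON in the script tag that would go in the page's <head>.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Using the full "NextWise Studio" string in `name` and spelling out the distinction in `disambiguatingDescription` is one way to put the naming-collision fix into the markup itself as well as the About page copy.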

Why I'm writing about this publicly

Partly because transparency builds trust, and I'd rather show the gap than pretend it doesn't exist.

But mostly because this is the work. Building the framework, running it on yourself before you run it for anyone else, measuring what actually happens -- that's what I bring to every engagement. This is just the version I'm doing in public.

I'll publish the 30-day results when I have them. Even if they're mixed.

If you want to run the same audit on your own brand, the framework is straightforward: pick five to seven prompts that represent how a real buyer might search for what you do. Log out and open an incognito window so your account history doesn't shape the answers, then run each prompt in ChatGPT, Perplexity, Claude, and Google AI Overviews and record exactly what comes back.
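If you keep the responses in a simple log, the tally can be sketched in a few lines of Python. This is one possible way to do it, not the tooling behind this audit: the `AuditRecord` structure, the `mention_counts` helper, the brand "Example Brand", and every response string below are made-up placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AuditRecord:
    prompt: str    # the exact prompt you typed
    tool: str      # which AI tool answered
    response: str  # the full answer, pasted as-is


def mention_counts(records, brand):
    """Return {tool: (mentions, total)} using a case-insensitive substring match."""
    counts = defaultdict(lambda: [0, 0])
    needle = brand.lower()
    for record in records:
        counts[record.tool][1] += 1
        if needle in record.response.lower():
            counts[record.tool][0] += 1
    return {tool: tuple(pair) for tool, pair in counts.items()}


# Placeholder log entries, invented purely to show the shape of the data.
records = [
    AuditRecord("best consultants for X", "ChatGPT", "Placeholder answer listing Agency A and Agency B."),
    AuditRecord("best consultants for X", "Perplexity", "Placeholder answer that names Example Brand."),
    AuditRecord("who helps with Y", "ChatGPT", "Placeholder answer listing Agency C."),
    AuditRecord("who helps with Y", "Perplexity", "Placeholder answer listing Agency A."),
]

summary = mention_counts(records, "Example Brand")
for tool, (hits, total) in summary.items():
    print(f"{tool}: mentioned in {hits} of {total} responses")
```

A plain substring match is deliberately naive. If your brand shares a word with another company, search for the full brand term (for me, "NextWise Studio" rather than "NextWise") so a namesake doesn't get counted as a mention.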

What you find will tell you more about your real positioning than most brand audits ever will.

If this resonated, I'd love to hear what you found. For more on why AI search is fundamentally a brand positioning problem -- not just a ranking problem -- my earlier piece "AI Search Is a Brand Positioning Problem" is a good place to start.

Ready to run this audit on your own brand? [Get in touch] -- I'd love to hear what you're working on.
